Paws: Connecting NGOs and Stray Animals in Need

Overview

Paws is a platform built to reduce response time for injured stray animals by connecting people with nearby NGOs and animal hospitals in real time. It combines AI, geolocation, and instant notifications to solve a coordination problem that usually leads to delays.

Inspiration Behind Paws

Our college hosts a state-level hackathon called Smart Bengal Hackathon. During the event, my friend Arnab proposed building a system that lets anyone report an injured stray animal quickly and ensures the right NGO is notified without manual effort.

The problem was obvious: people want to help, but don’t know who to contact. NGOs, on the other hand, lack a structured, real-time reporting pipeline.

Out of 510+ teams, we reached the top 30 and made it to the finals. Judges specifically appreciated the practical impact, UI clarity, and how AI was integrated into the workflow.

Tech Stack

The system is built for speed and real-time interaction.

- Frontend: React.js, Material UI, Tailwind CSS (PWA enabled)

- Backend: Django + Django REST Framework

- Realtime: Django Channels

- Notifications: Firebase Cloud Messaging

- AI: Azure Custom Vision (fine-tuned models)

- Media Storage: Cloudinary

- Maps: OpenStreetMap

How It Works

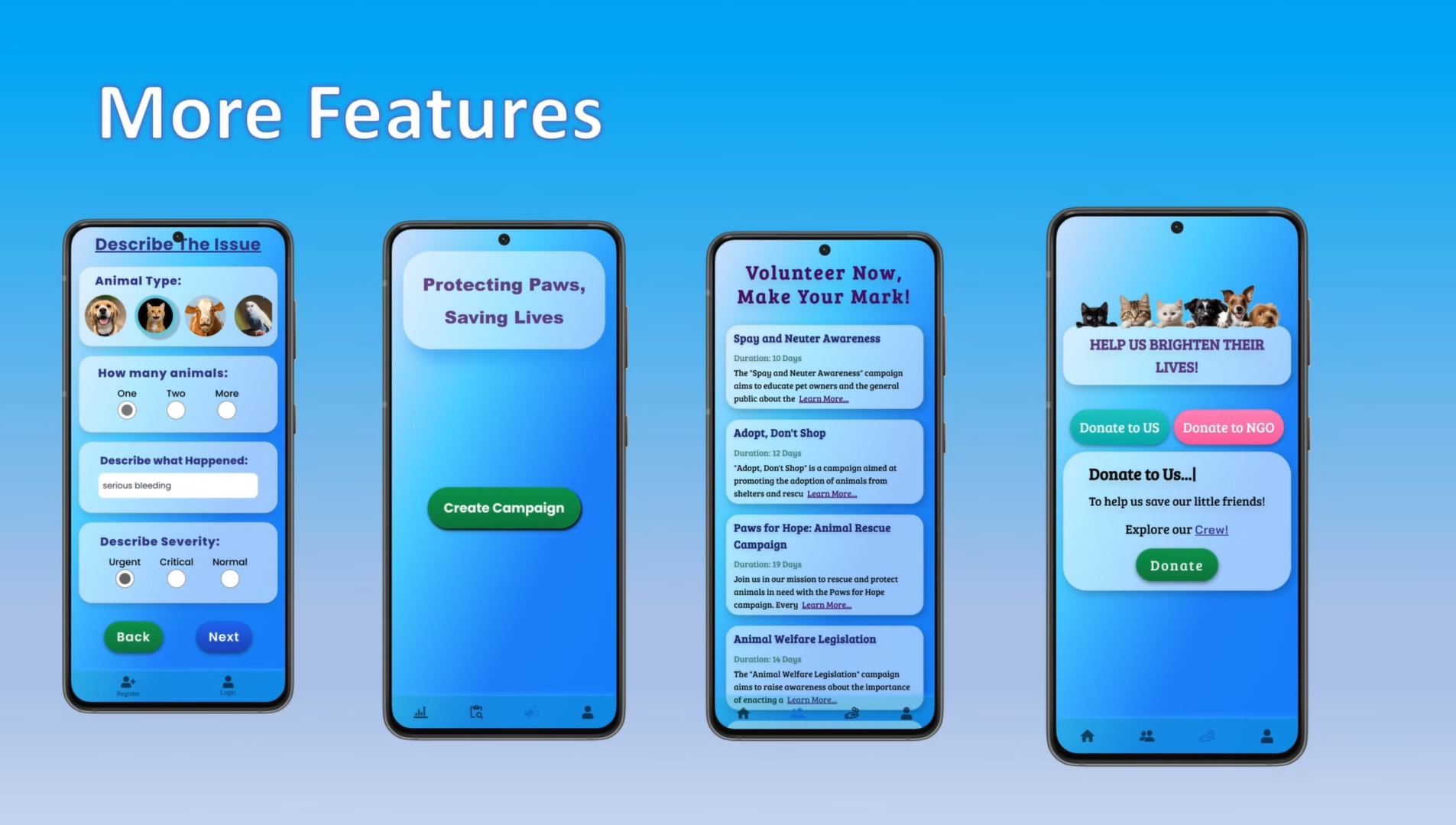

Reporting an Incident

Users upload an image of the injured animal. Location is auto-detected using the browser’s geolocation API, and users can optionally add landmarks and descriptions.

The AI model predicts:

- animal type

- injury severity

Users can correct predictions before submitting. Login is optional.

After submission, the user receives confirmation along with basic guidance on what to do until help arrives.

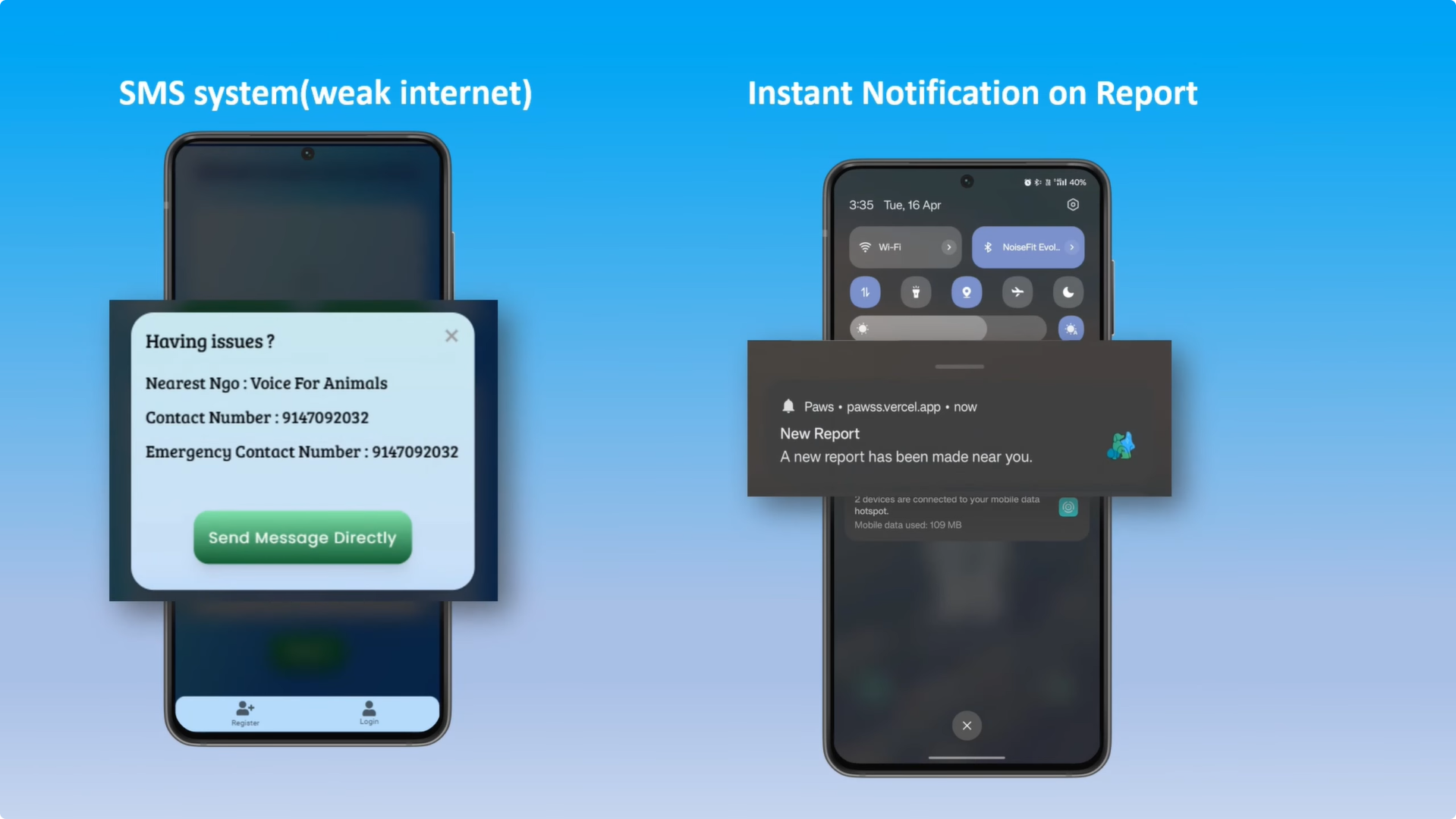

NGO Response System

Once submitted, the backend assigns the case to the nearest NGO and sends a push notification.

NGOs can:

- accept or reject cases

- view details and location

- navigate via Google Maps

- update case status

Every status update is pushed to the reporting user in real time.
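The accept/reject/update cycle can be pictured as a small state machine. The sketch below is only an illustration: the status names (`pending`, `accepted`, `in_progress`, `rejected`, `resolved`) and the `advance_status` helper are assumptions, not taken from the Paws codebase, and what happens after a rejection is not covered here.

```python
# Hypothetical status names; the write-up only says NGOs can accept,
# reject, and update a case, not which states exist.
ALLOWED_TRANSITIONS = {
    "pending": {"accepted", "rejected"},
    "accepted": {"in_progress", "resolved"},
    "in_progress": {"resolved"},
    "rejected": set(),   # terminal in this sketch
    "resolved": set(),   # terminal in this sketch
}


def advance_status(current: str, new: str) -> str:
    """Return the new status if the move is allowed, otherwise raise ValueError."""
    if new not in ALLOWED_TRANSITIONS.get(current, set()):
        raise ValueError(f"cannot move case from {current!r} to {new!r}")
    return new
```

Validating transitions in one place means an out-of-order update (for example, resolving a case nobody accepted) is refused before it reaches the user.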

NGO Analytics

NGOs get insights like:

- reports per day/week/month

- heatmaps of incidents

- common injury types

- average resolution time
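As a rough idea of how such numbers could be derived, here is a minimal sketch in plain Python. The `Case` record and its field names are assumptions made for illustration; the real backend would most likely compute these with database queries instead.

```python
from collections import Counter
from datetime import datetime, timedelta
from typing import List, NamedTuple, Optional, Tuple


class Case(NamedTuple):
    # Hypothetical record shape, not the actual Paws data model.
    reported_at: datetime
    resolved_at: Optional[datetime]  # None while the case is still open
    injury: str


def reports_per_day(cases: List[Case]) -> Counter:
    """Count reports grouped by calendar date."""
    return Counter(c.reported_at.date() for c in cases)


def common_injuries(cases: List[Case], top: int = 3) -> List[Tuple[str, int]]:
    """Return the most frequent injury labels with their counts."""
    return Counter(c.injury for c in cases).most_common(top)


def average_resolution(cases: List[Case]) -> Optional[timedelta]:
    """Mean time from report to resolution, ignoring open cases."""
    durations = [c.resolved_at - c.reported_at for c in cases if c.resolved_at is not None]
    if not durations:
        return None
    return sum(durations, timedelta()) / len(durations)
```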

Donations and Awareness

Users can donate directly to NGOs or support the platform.

NGOs can post:

- announcements

- events

- awareness campaigns

AI Model: How It Works (Azure Custom Vision)

We used Azure Custom Vision to build and deploy our models without training everything from scratch.

Model Design

We use two models:

1. Animal Detection Model

- Detects animal type (dog, cat, cow, etc.)

- Handles multiple animals in an image

- Outputs bounding boxes and confidence scores

2. Injury Classification Model

- Classifies injury severity (normal, urgent, critical)

- Outputs probability scores
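To show how the two kinds of output might be consumed, here is a small sketch. The dictionary shapes, the `confidence` key, and the 0.5 threshold are simplified assumptions for illustration, not the exact Azure Custom Vision response format.

```python
from typing import Dict, List


def confident_detections(detections: List[dict], threshold: float = 0.5) -> List[dict]:
    """Keep detections at or above the threshold, most confident first."""
    kept = [d for d in detections if d["confidence"] >= threshold]
    return sorted(kept, key=lambda d: d["confidence"], reverse=True)


def top_severity(probabilities: Dict[str, float]) -> str:
    """Return the severity label with the highest probability."""
    if not probabilities:
        raise ValueError("no severity probabilities returned")
    return max(probabilities, key=probabilities.get)


# Illustrative values only, not real model output.
detections = [
    {"label": "dog", "confidence": 0.92, "box": (0.10, 0.20, 0.30, 0.40)},
    {"label": "cat", "confidence": 0.31, "box": (0.55, 0.10, 0.20, 0.25)},
]
severity = {"normal": 0.10, "urgent": 0.70, "critical": 0.20}
animals = confident_detections(detections)  # only the dog passes the threshold
level = top_severity(severity)              # "urgent"
```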

Training Process

- Collected and labeled dataset

- Uploaded to Azure Custom Vision

- Tagged images (bounding boxes + labels)

- Trained using transfer learning

- Evaluated using precision, recall, and accuracy

Performance

- Animal Model

  - Precision: ~95.8%

  - Recall: ~91.0%

  - Accuracy: ~95.7%

- Injury Model

  - Precision: ~83.3%

  - Recall: ~71.4%

  - Accuracy: ~91.0%

The injury model is weaker, most visibly on recall (~71.4%), because its training data was limited and noisy.
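For readers unfamiliar with these metrics, the sketch below gives the standard textbook definitions in terms of true/false positives and negatives. The counts in the usage lines are made up for illustration and are not the project's evaluation data; how Azure Custom Vision computes its reported figures internally is not covered here.

```python
def precision(tp: int, fp: int) -> float:
    """Of everything the model flagged as positive, the fraction that was correct."""
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Of everything that really was positive, the fraction the model found."""
    return tp / (tp + fn) if (tp + fn) else 0.0


def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Fraction of all predictions that were correct."""
    total = tp + tn + fp + fn
    return (tp + tn) / total if total else 0.0


# Hypothetical counts, chosen only to exercise the formulas.
p = precision(tp=40, fp=10)
r = recall(tp=40, fn=10)
a = accuracy(tp=40, tn=40, fp=10, fn=10)
```

For an injury classifier, low recall is the costlier failure: it means injured animals that the model did not flag.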

Inference Flow

- User uploads image

- Backend sends it to Azure API

- Model returns predictions

- Results shown to user

- User can override

The AI pre-fills the report so submission stays fast, and the user override means a wrong prediction never blocks a report or forces a hard dependency on AI accuracy.
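The override step can be sketched as a simple merge where the user's correction wins field by field. The `Labels` type, the `resolve_labels` helper, and the `"unknown"` placeholder are hypothetical names used for illustration, not part of the actual backend.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Labels:
    animal_type: str
    severity: str


def resolve_labels(
    predicted: Optional[Labels],
    animal_override: Optional[str] = None,
    severity_override: Optional[str] = None,
) -> Labels:
    """Prefer the user's correction for each field; fall back to the AI prediction.

    If no prediction is available at all (predicted is None), fields the
    user did not fill in are marked "unknown" so the report can still be sent.
    """
    base = predicted if predicted is not None else Labels("unknown", "unknown")
    return Labels(
        animal_type=animal_override or base.animal_type,
        severity=severity_override or base.severity,
    )
```

For example, `resolve_labels(Labels("dog", "normal"), severity_override="critical")` keeps the predicted animal type but uses the severity chosen by the user.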

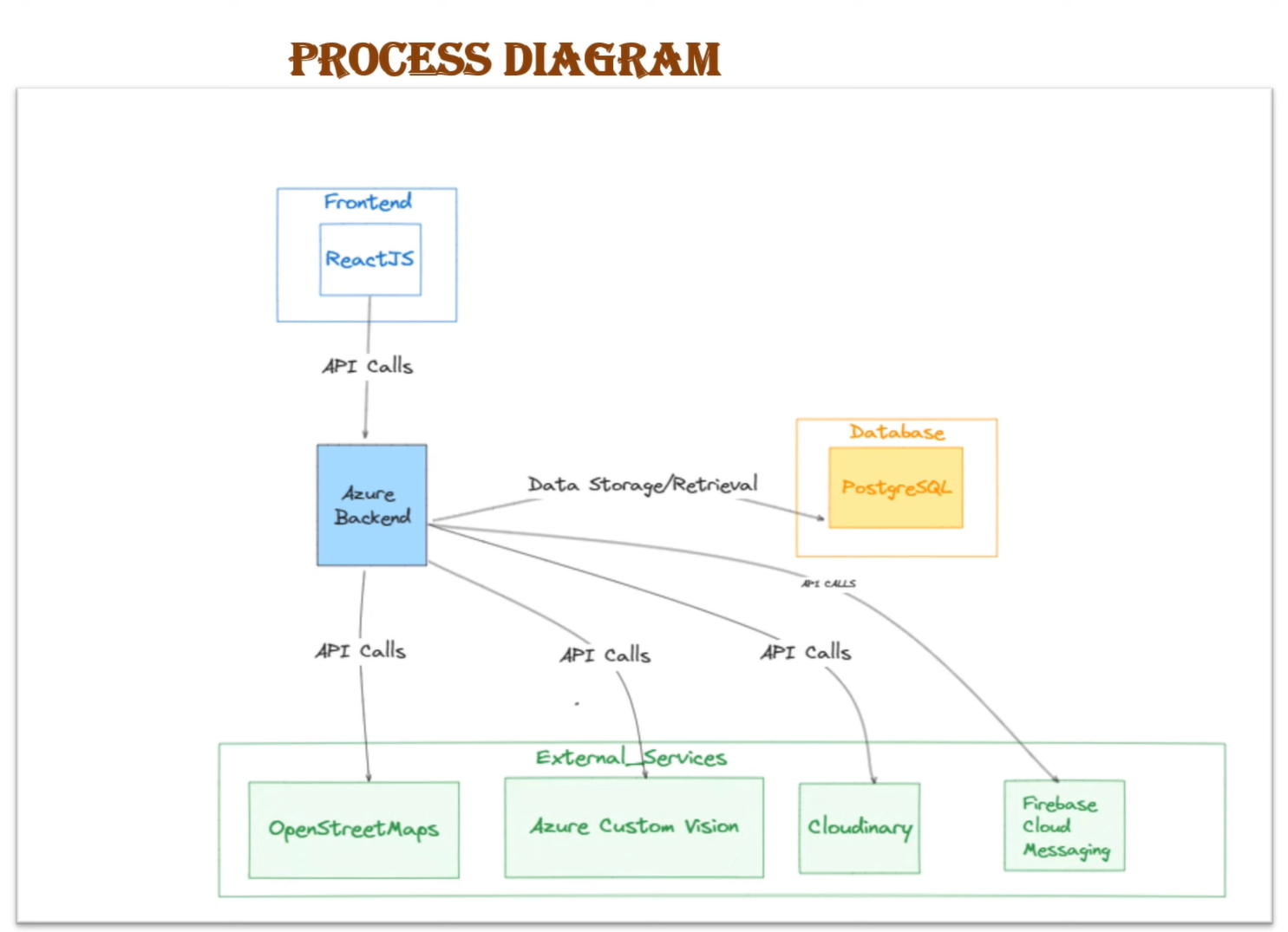

System Architecture (Process Diagram)

This diagram shows how different components interact.

Explanation

- The React frontend sends API requests to the backend

- The backend (hosted on Azure) acts as the central controller

- It interacts with:

  - PostgreSQL (data storage)

  - Azure Custom Vision (AI inference)

  - Cloudinary (image storage)

  - Firebase (notifications)

  - OpenStreetMap (location services)

This separation ensures:

- modular architecture

- easy scaling of services

- independent failure handling

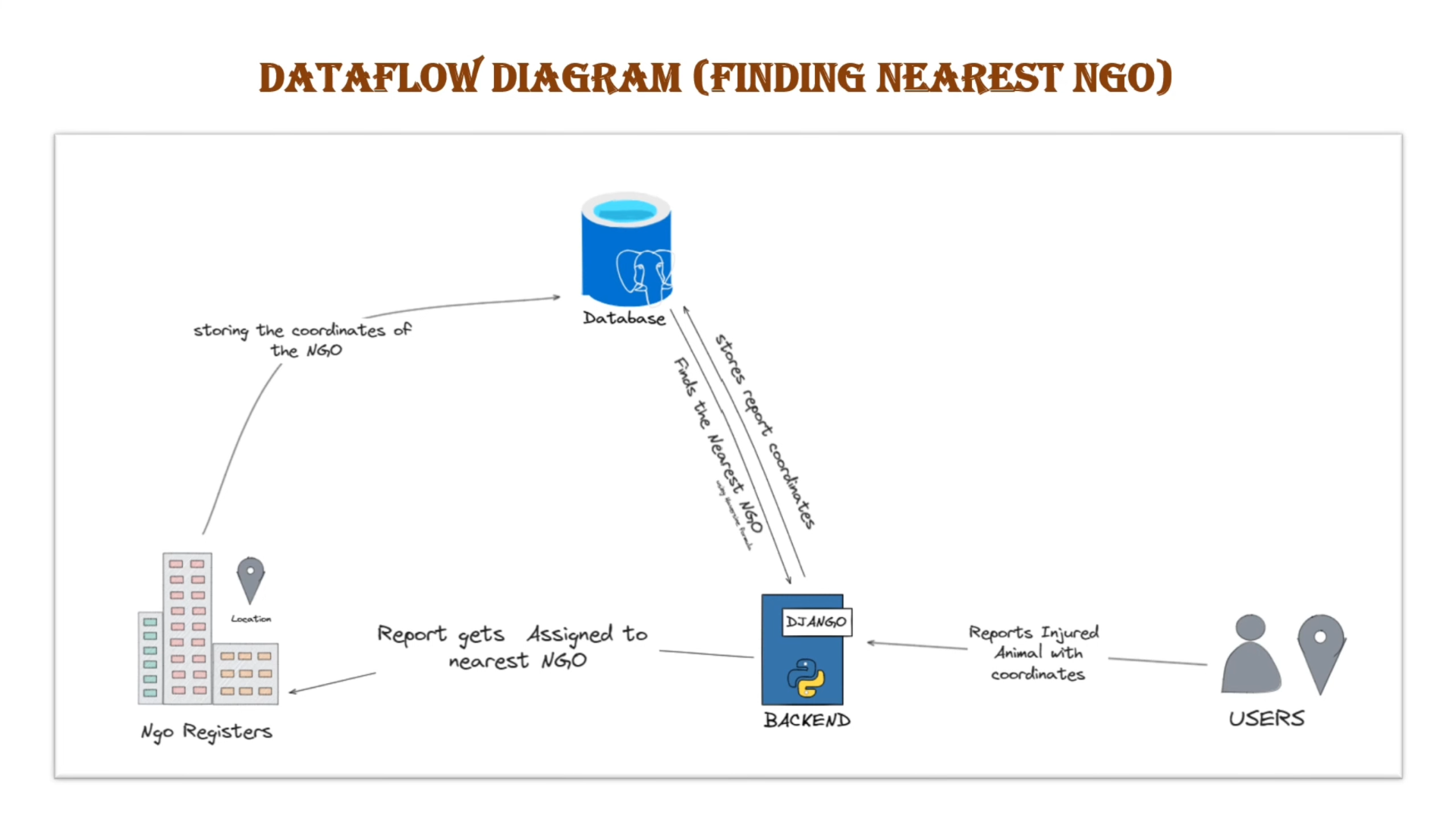

Data Flow (Finding Nearest NGO)

Explanation

- NGOs register and their coordinates are stored in the database

- User submits a report with location

- Backend fetches all NGO locations

- Distance is calculated (via Haversine formula)

- Nearest NGO is selected

- Case is assigned and notification is sent

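This assigns each case to the NGO with the shortest straight-line distance, with no manual dispatch. Straight-line distance only approximates actual travel time, so the nearest NGO on the map is not always the fastest to arrive.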
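The selection steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the project's actual code: the `Ngo` record, the function names, and the sample coordinates are assumptions, and the Earth is treated as a sphere of mean radius 6371 km.

```python
import math
from typing import List, NamedTuple, Optional

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


class Ngo(NamedTuple):
    # Hypothetical record shape; the real model fields are not shown in this post.
    name: str
    lat: float
    lon: float


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two (lat, lon) points, in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearest_ngo(report_lat: float, report_lon: float, ngos: List[Ngo]) -> Optional[Ngo]:
    """Return the NGO closest to the report, or None if none are registered."""
    if not ngos:
        return None
    return min(ngos, key=lambda n: haversine_km(report_lat, report_lon, n.lat, n.lon))


# Illustrative NGOs and report location (approximate city coordinates).
ngos = [Ngo("Kolkata NGO", 22.5726, 88.3639), Ngo("Durgapur NGO", 23.5204, 87.3119)]
chosen = nearest_ngo(22.5958, 88.2636, ngos)  # a report from Howrah
```

Scanning every NGO is linear in the number of registered NGOs, which is fine at small scale; a spatial index or database-side geo query would be the usual next step if the list grows large.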

Conclusion

Paws is not just a hackathon project—it’s a system that can realistically reduce response time for injured stray animals.

What works:

- simple reporting flow

- real-time NGO coordination

- AI-assisted classification

What doesn’t yet:

- NGO reliability isn’t guaranteed

- AI struggles with messy real-world images

- scaling beyond controlled environments is untested

Fix those, and this becomes a real product—not just a good prototype.

GitHub

https://github.com/Innovateninjas/Paws-frontend/